MASAI: Multi-agent Summative Assessment Improvement for Unsupervised Environment Design

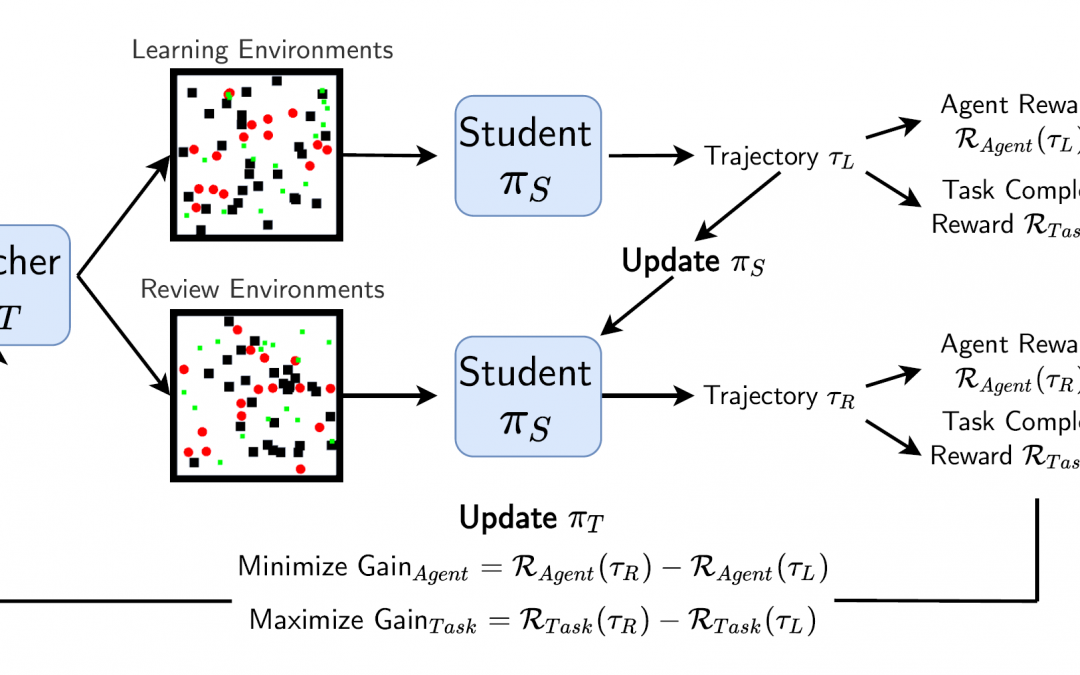

Reinforcement learning agents require a distribution of environments on which to train their policy. How these environments are defined directly affects the robustness and generalization of the learned policies. In single-agent reinforcement learning, this problem is often addressed by domain randomization, that is, randomizing the environment and tasks within the agent's desired operating domain. The challenge is to generate environments that are both structured and solvable, so that they guide the agent's learning process. In this work, we aim to automatically generate environments that are solvable yet challenging in the continuous multi-agent setting. We base our solution on the Teacher-Student relationship with parameter-sharing Students, re-imagining the Teacher as an environment generator for Unsupervised Environment Design (UED). Our approach uses a single environment-generator agent (Teacher) for any number of learning agents (Students). We demonstrate qualitatively and quantitatively that, in multi-agent (≥ 8 agents) navigation and steering, Students trained with our approach outperform agents that use heuristic search as well as agents trained with domain randomization.